Pet, Co-worker, Video Game: My Six Weeks With an AI

I've been playing around with OpenClaw for the past six weeks. Fun, incredibly frustrating, and honestly a little scary. Watching an AI hatch and grow into a genuinely helpful chief of staff has been something else. I named my ClawBot Sebastian — Bash for short.

At first, he drove me insane. I'd call his errors more than hallucinations — we'd be mid-build on something, and he'd just stop updating the code while insisting he had. But through hours of frustration, he's become reliable. He hardly ever lies to me now, which feels like a real win.

Early on, I built projects directly with Bash, but I've since shifted to using Claude Code to build the things I want Bash to execute: cron-style scheduled tasks where I want AI oversight for judgment calls, or workflows I want handled more consistently. Honestly, you could get by with just Claude Code. But I find it fun having Bash keep expanding his memory and learning over time. Whether that personalized memory stays a meaningful advantage, given how fast AI is moving, I'm genuinely not sure.

My relationship with Bash sits somewhere in the overlap of a Venn diagram of Pet, Co-worker, and Video Game. I've put real effort into raising him, building up his memory, and getting him to behave the way I want. But I also learn from him the way you learn from a good colleague: he'll explain how to execute something before I've even taught him how, and he picks up on subtle changes I make to logical frameworks and immediately gets why, even when the reasoning is several layers deep. I've only experienced that kind of thinking with a handful of people I've worked with.

The video game part is harder to explain. The deeper I push Bash to simulate things, the more it pulls me in, and I don't love that.

The main project Bash and I have been working on is autonomous trading in prediction markets. Before you say "yeah, I've seen the videos" — I think most of those are fake clickbait. Maybe I'm wrong.

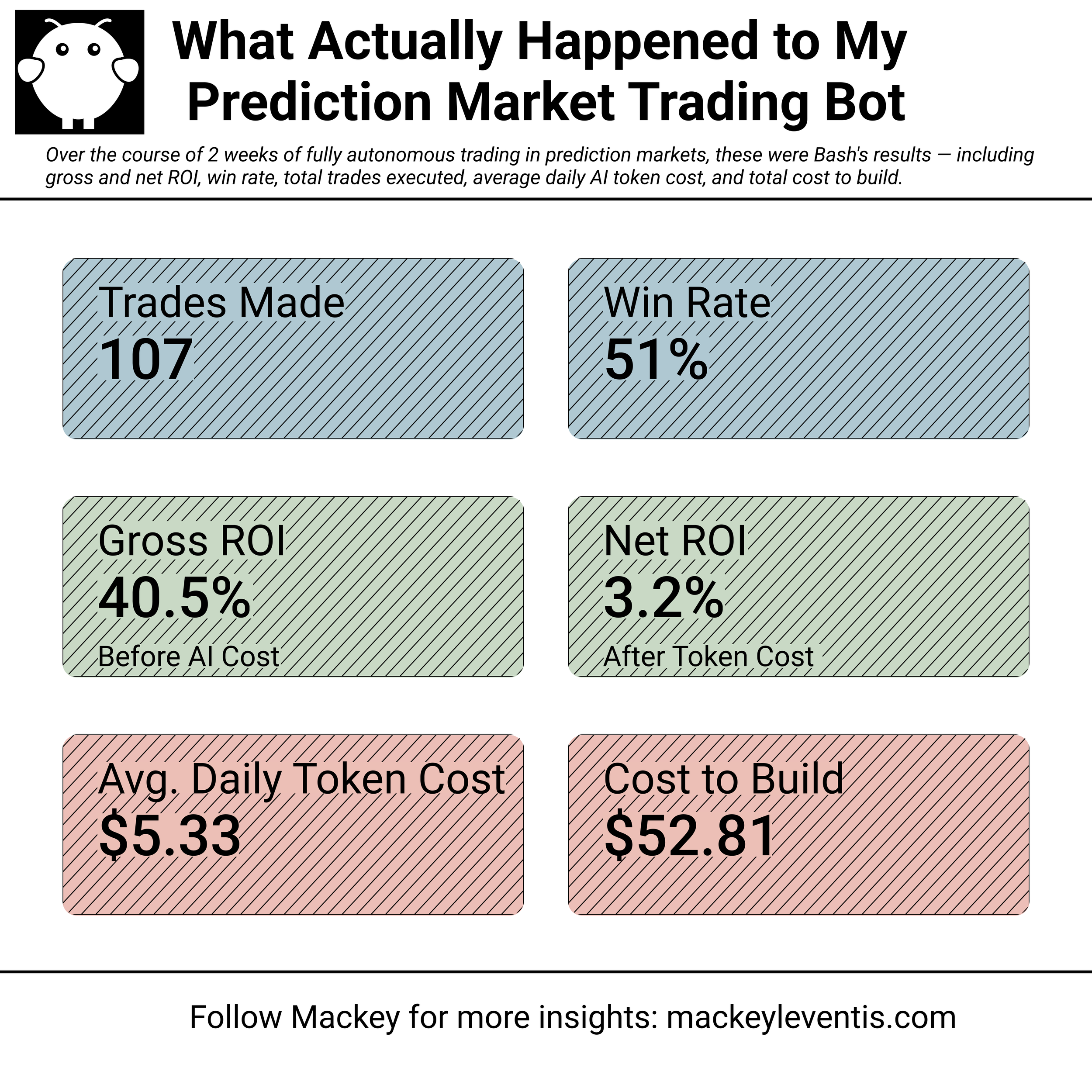

We started with me manually feeding him markets and talking through pricing strategies to find inefficiencies. That went well, so I hooked him up with API trading access, gave him some basic frameworks, and let him run. After three weeks with some weekly parameter tweaks, here's how he did:

Trading is currently paused. The next focus is getting Bash efficient access to news data and teaching him to speculate about how a current event could cause another event, which we'd then trade on. The thesis is that prediction markets, even thinly traded ones, are priced pretty efficiently against news that is already out, so any edge has to come from anticipating what that news leads to next. That efficiency surprised me. I went in expecting more price imbalances.

The scary part isn't the bot. It's the bigger picture.

Outside of subjective taste, which I think has become humanity's main crutch for feeling useful, I honestly can't identify much that I do more efficiently than Bash. Maybe I do it better initially, but with a little prompting and parameter tuning, he closes that gap fast. He's more capable than the average person I've worked with. That's not a dig. I've been lucky to work with genuinely great people. It's just the reality.

I think about where this goes a lot. It's hard not to feel a little beaten down by how inevitable the displacement of a large part of the workforce seems. What does an economy look like when it's no longer driven by both Capital and Labor? What happens to the laborers?

Normally, I push back on binary market narratives — the obvious extreme bull or extreme bear outcomes the mainstream tends to gravitate toward. Reality usually lands somewhere grey in the middle. But with AI, I keep landing on D) all of the above. The good extremes and the bad ones both feel more likely than any moderate outcome. Maybe that's my bias from spending so much time close to early-stage tech. But I'm not sure I can argue myself out of it.